Automatic Prompt Engineer (APE)

Overview

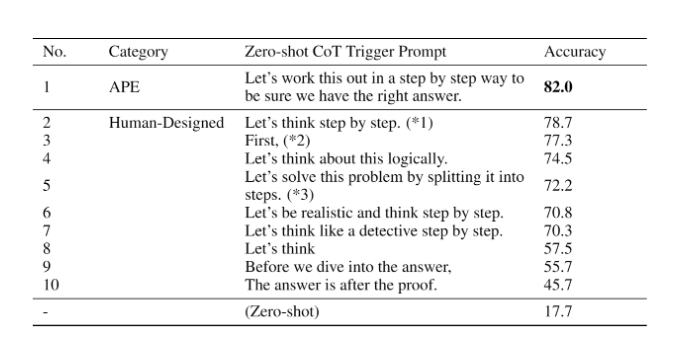

Zhou et al., (2022) propose Automatic Prompt Engineer (APE), a framework for automatic instruction generation and selection. Instruction generation is framed as natural language synthesis and addressed as a black-box optimization problem, with LLMs used to generate and search over candidate solutions.

Image Source: Zhou et al., (2022)

How It Works

In the first step, a large language model (acting as an inference model) is given input-output demonstrations of a task and generates instruction candidates for it. These candidates guide the search procedure: each instruction is executed with a target model, and the instruction with the highest computed evaluation score is selected.
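The generate, execute, and select loop described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: `ape_select`, `execution_accuracy`, `toy_propose`, and `toy_target` are hypothetical names, the two "models" are deterministic stand-ins for real LLM calls, and exact-match accuracy is only one possible evaluation score.

```python
from typing import Callable, List, Tuple

Demo = Tuple[str, str]  # (input, expected output)

def execution_accuracy(instruction: str, demos: List[Demo],
                       target_model: Callable[[str, str], str]) -> float:
    """Fraction of demos the target model answers exactly right under this instruction."""
    correct = sum(target_model(instruction, x).strip() == y for x, y in demos)
    return correct / len(demos)

def ape_select(propose: Callable[[List[Demo], int], List[str]],
               target_model: Callable[[str, str], str],
               demos: List[Demo],
               num_candidates: int = 3) -> Tuple[str, float]:
    """Generate instruction candidates, score each one, and return the best."""
    candidates = propose(demos, num_candidates)
    scored = [(execution_accuracy(c, demos, target_model), c) for c in candidates]
    best_score, best_instruction = max(scored, key=lambda pair: pair[0])
    return best_instruction, best_score

# --- Toy stand-ins (assumptions): replace these with real LLM calls. ---
ANTONYMS = {"hot": "cold", "up": "down", "big": "small"}

def toy_propose(demos: List[Demo], n: int) -> List[str]:
    # A real inference model would write these after reading the demos.
    pool = ["Repeat the word.",
            "Write the antonym of the word.",
            "Translate the word to French."]
    return pool[:n]

def toy_target(instruction: str, x: str) -> str:
    # A real target model would follow the instruction; this stub only
    # "understands" instructions that mention antonyms.
    if "antonym" in instruction.lower():
        return ANTONYMS.get(x, x)
    return x

demos = list(ANTONYMS.items())
best, score = ape_select(toy_propose, toy_target, demos)
print(best, score)
```

Because the candidates are scored only through the target model's outputs, the selection step needs no gradients or model internals. In practice the scoring demos would usually be held out from the ones shown to the inference model.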

Key Discovery

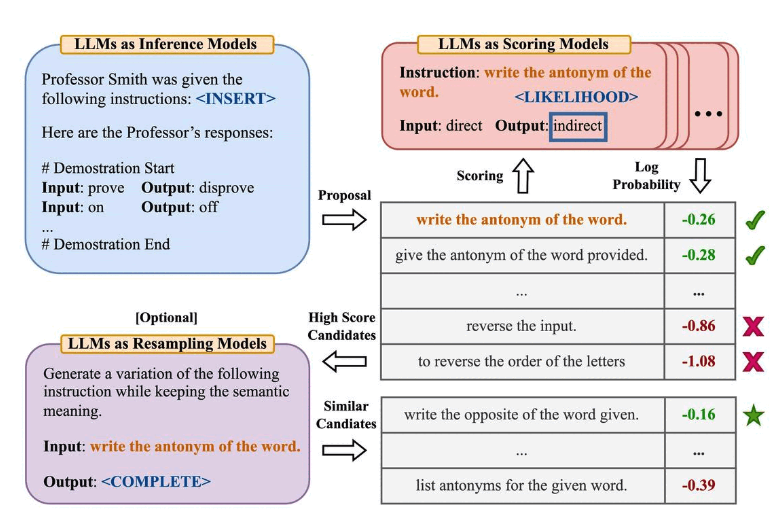

APE discovers a better zero-shot CoT prompt than the human-engineered "Let's think step by step" prompt (Kojima et al., 2022).

The prompt "Let's work this out in a step by step way to be sure we have the right answer." elicits chain-of-thought reasoning and improves performance on the MultiArith and GSM8K benchmarks.

Image Source: Zhou et al., (2022)
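To try the discovered prompt, it can be appended to a question as a zero-shot CoT trigger. The sketch below is illustrative only: `build_zero_shot_cot_prompt` is a hypothetical helper, the `Q:`/`A:` layout is an assumed template, and the sample question is made up. The two trigger strings are the ones quoted above.

```python
# Trigger phrases quoted in the text above.
HUMAN_COT_TRIGGER = "Let's think step by step."
APE_COT_TRIGGER = ("Let's work this out in a step by step way "
                   "to be sure we have the right answer.")

def build_zero_shot_cot_prompt(question: str,
                               trigger: str = APE_COT_TRIGGER) -> str:
    """Place the trigger at the start of the answer so the model continues with its reasoning."""
    # The Q:/A: layout is an assumption; adapt it to your model's format.
    return f"Q: {question.strip()}\nA: {trigger}"

# Hypothetical example question.
question = "A shop sells pens in packs of 4. How many pens are in 6 packs?"
prompt = build_zero_shot_cot_prompt(question)
print(prompt)
```

Passing `trigger=HUMAN_COT_TRIGGER` builds the baseline prompt, which makes it easy to compare the two phrasings on the same set of questions.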

Related Research

This paper touches on an important topic in prompt engineering: automatically optimizing prompts. While this guide doesn't go deep into the topic, here are a few key papers if you are interested:

- Prompt-OIRL - proposes to use offline inverse reinforcement learning to generate query-dependent prompts.

- OPRO - introduces the idea of using LLMs to optimize prompts; for example, prompting the model to "Take a deep breath" improves performance on math problems.

- AutoPrompt - proposes an approach to automatically create prompts for a diverse set of tasks based on gradient-guided search.

- Prefix Tuning - a lightweight alternative to fine-tuning that prepends a trainable continuous prefix for NLG tasks.

- Prompt Tuning - proposes a mechanism for learning soft prompts through backpropagation.

Key Benefits

- Automated Optimization: Reduces manual prompt engineering effort

- Performance Improvement: Can discover prompts that outperform human-designed ones, as with the zero-shot CoT prompt above

- Scalable Approach: Can be applied to various tasks and domains

- Black-Box Optimization: Needs only the ability to query the LLM, not access to its weights or gradients

Applications

- Chain-of-thought prompting optimization

- Instruction generation for specific tasks

- Automated prompt discovery

- Performance improvement on reasoning benchmarks

Related Topics

- Chain-of-Thought Prompting - Understanding CoT prompting techniques

- Zero-Shot Prompting - Prompting without examples

- Prompt Engineering Guide - General prompt engineering techniques

References

- Zhou et al., (2022) - Large Language Models are Human-Level Prompt Engineers